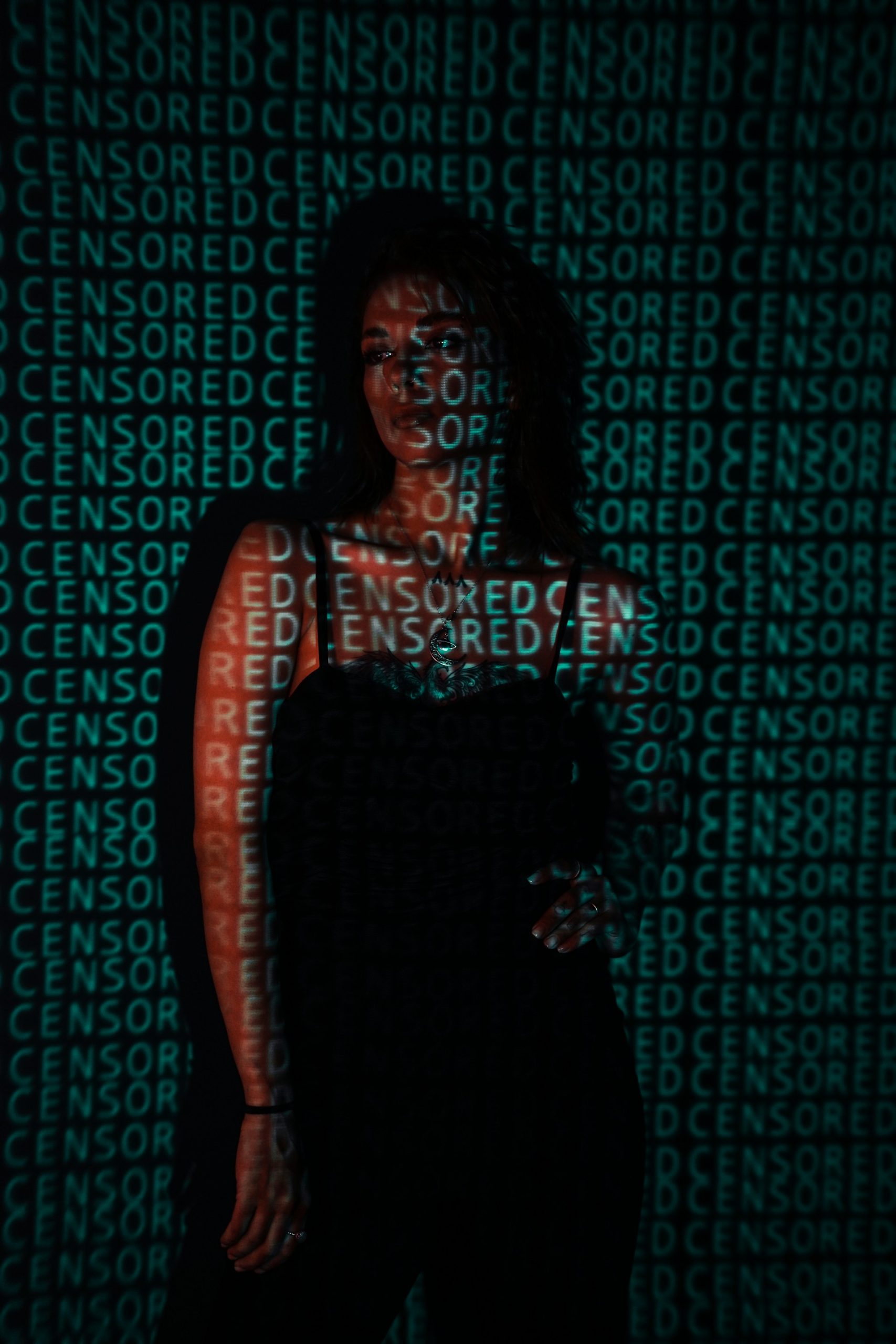

Comment Writer Lucy Mould sheds light on the injustice faced by women as a result of ‘nudifying’ AI chatbots and the shocking lack of legal protections offered to them in response

X’s AI chatbot, Grok, has been the epicentre of Elon Musk’s first controversy of 2026. This feature of Musk’s app has been used to ‘nudify’ women and girls on the platform at an alarming rate, using AI to digitally undress them and create sexualised deepfake images, prompting an ongoing investigation by Ofcom to determine whether X has broken the law under the Online Safety Act.

Despite it being illegal in the UK to share intimate material of somebody without their consent, also known as ‘revenge porn’, by January 8th, up to 6,000 demands for bikini photos were being made to the chatbot every hour. Countless women and children have already been violated on X by Grok’s compliance with requests from other users to generate photos of them undressed, covered in white fluids, and/or in sexually suggestive positions. Do women deserve nudification simply for the radical act of daring to show their faces in public, whether online or offline?

In response to the justified public outrage, Musk belatedly introduced measures to supposedly control users’ ability to create and edit images using the Grok account on X, including limiting this feature to paid subscribers, and geoblocking the ability to nudify images in jurisdictions where it is illegal to create these images without consent. As of February 6th, these laws would apply in the UK, yet it remains unclear whether a VPN would be sufficient to negate this block, or whether users would still be able to view content of this kind created by others.

The fact is, law and policy move at a glacial pace in comparison to the lightning-fast nature of technological advancement. The AI industry seems to move without regulation while the rest of the world scrambles to keep up, and women and girls are consistently left further behind than their male counterparts. Furthermore, putting features behind paywalls does not stop abuse; it just puts the ability into the hands of those with enough money. Monetising the abuse of women is just another way for Musk to cling to his billionaire status in the absence of a real personality, with no regard for who it harms along the way.

I find it unsurprising, though nonetheless disappointing, that Musk’s provisions cater only to the law, rather than addressing the real harms experienced by women globally affected by AI’s ability to produce personalised pornography at the click of a button. In my opinion, it is shameful that as a society, violence against women and girls must be denoted as a crime before it is considered important to act on.

Nigel Farage, who earned over £9,000 from X in 2025, said that the situation was ‘horrible’, but that the suggestion of banning X in the UK, a possibility depending on the outcome of the Ofcom report, was ‘appalling’ and an attack on free speech. I struggle to see how it is currently possible for women to utilise their right to free speech on X when anything they say may be countered with a degrading image of their likeness in a string bikini, covered in white liquid, bent over at the request of anyone who sees fit to shut them up. In these circumstances, whose speech is actually free: female targets of deepfakes or those making them?

The harms of AI are innumerable and complicated, but overwhelmingly target women. 96% of all deepfakes generated are non-consensual sexual deepfakes, and 99% of those are made using images of women. The rise of the pornography industry already gave men an atmosphere to fantasise about women never saying ‘no’. They now have the tools to create the material themselves, with a lack of consent built into the very act. This lack of consent may even be what makes deepfakes so attractive in the first place. I would argue they are expressions of control and power, more sinister than merely erotic material. They are a policing measure to make the Internet hostile to women, just as catcalling and the threat of assault make the public sphere hostile to women, just as sexual harassment makes the professional sphere hostile to women. When it hurts too much to view the material to report it, as one woman who had over 100 images generated of herself felt, it is easier to give up or log off. Again, I ask you: whose freedom and speech are being protected?

We must weigh up the importance of so-called ‘free speech’ against women’s safety, and remember societal progress is not straightforward. Many issues related to AI’s proliferation are seemingly symptomatic of a culture where progress is defined in terms of profit, instead of improvements in quality of life. We must support the women already violated by this technology, and act quickly to ensure no more are harmed.

AI has gone too far unconstrained, but it is not too late. To me, one of the worst mistakes we can make is to assume that the only people generating deepfakes are loners restricted to the fringes of society, constantly tugging themselves off in filthy bedrooms and never touching grass. This is a society-wide issue. We must all be angry.

In an economy of attention, where our capacities to care are stretched thin by a constant barrage of information, choosing where to direct your gaze is a valuable decision. We absolutely cannot look away.

